Identifying Bacteria in Biological Samples by their Genomes

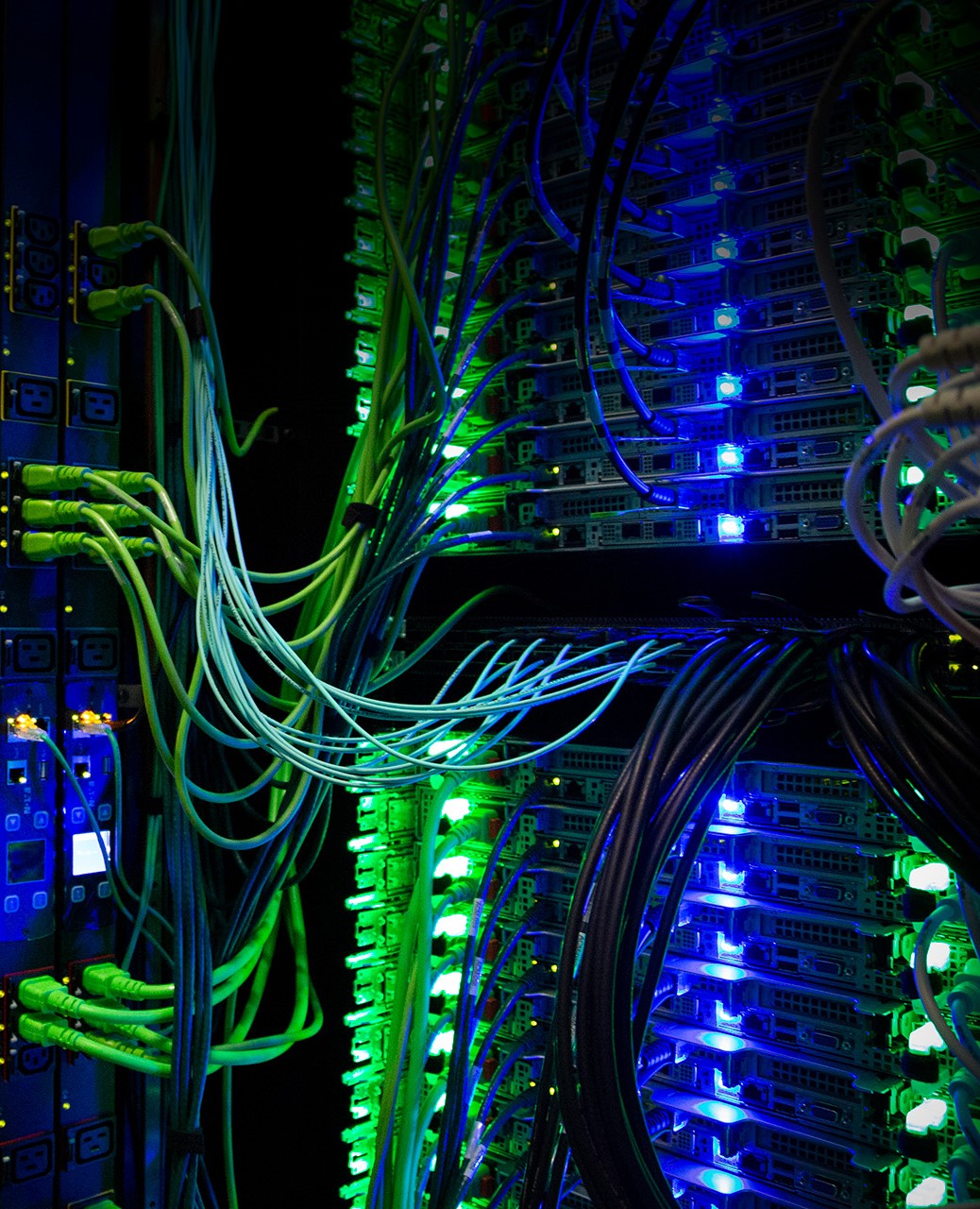

For more than a year and a half, Dr. Matthew Scholz was a Research Specialist with Michigan State University’s BiCEP team. In 2014, he worked with the university’s High Performance Computing Center (HPCC) and its 6-terabyte RAM high-performance computer (HPC) to generate a database of the unique DNA signatures of the genomes of all bacteria that had been sequenced to date. That database is now being used to analyze samples of biological material and reveal which types of bacteria are present, a number that could reach into the tens of thousands.

“It requires as much RAM as there are unique sequences in the known genomes,” Scholz said. “And new genomes are added all the time — several hundred a year. As it grows, the need for memory grows as well.”

Scholz said there are currently about 2,500 sequenced genomes, and that number is expected to increase exponentially as discovery and analytical tools are refined.

“The only barrier is cost to do it,” he said. “And that barrier is continuously going down.”

Scholz, now a Bioinformatics Scientist at Vanderbilt University, previously worked for the Los Alamos National Laboratory, with which he collaborated on this project. The database is an essential part of GOTTCHA (Genomic Origins Through Taxonomic CHAllenge), a tool that compares next-generation sequencing data against the database to identify which bacteria or viruses are present.

“To generate the database, we used an algorithm that drew from all the known and sequenced bacteria in the database,” Scholz said. “It takes sequences of all the genomes and breaks them into base pair chunks that are 26 letters long. Then it does that for all bacteria in the entire data pool. That’s easy.”

What takes time and memory, Scholz said, is the next step: the HPC compares every fragment with those from every other bacterium, identifying and removing any that are shared between them. This comparison becomes more complicated every time a new genome is added.
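
The two steps described above can be illustrated with a minimal Python sketch. It is not the project's actual code: the function names are hypothetical, the fragments are assumed to overlap, and a single in-memory index stands in for the fragment-by-fragment comparison. Reverse-complement strands are ignored for simplicity.

```python
from collections import defaultdict

K = 26  # fragment length quoted in the article


def fragments(sequence, k=K):
    """Return the set of all overlapping k-letter fragments of a sequence."""
    return {sequence[i:i + k] for i in range(len(sequence) - k + 1)}


def unique_signatures(genomes, k=K):
    """Map each genome name to the fragments that occur in no other genome."""
    # Index every fragment by the genomes that contain it. This index has to
    # sit in memory, so it grows with the number of distinct fragments.
    owners = defaultdict(set)
    for name, sequence in genomes.items():
        for fragment in fragments(sequence, k):
            owners[fragment].add(name)

    # Keep only the fragments owned by exactly one genome.
    signatures = {name: set() for name in genomes}
    for fragment, names in owners.items():
        if len(names) == 1:
            (only,) = names
            signatures[only].add(fragment)
    return signatures


# Toy example with 4-letter fragments instead of 26.
toy = {"A": "ACGTACGG", "B": "TTGTACGA"}
print(unique_signatures(toy, k=4))
```

In the toy example, the fragments `GTAC` and `TACG` appear in both sequences and are dropped, leaving three unique fragments per genome. The index holds one entry per distinct fragment across all genomes, which is consistent with Scholz's remark that memory needs track the number of unique sequences.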

“It becomes a matter of memory and time,” Scholz said. “Every genome has between a couple hundred thousand and a couple million pieces, all of which need to be compared [in RAM]. It becomes extremely expensive.”

He said the first time the method was used, the analysis took 10 days using 1.5 terabytes of RAM, and processed about 1,600 genomes. This time, by utilizing 5.4 terabytes on the new 6-terabyte, 96-processor HPC machine, it analyzed almost 2,700 genomes in the same 10-day span.

“It more than doubled memory requirement, and tripled speed,” he said. “It was impressive. We wouldn’t have been able to do this three years ago. We almost ran out of memory.”

Scholz said the most straightforward application of the data is to quickly and accurately identify the bacteria in a biological sample.

“This can be very useful in human health,” he said. “If someone is ill, we might just need to take a sample from their saliva in order to identify any pathogens.”

A second use might be to understand the types and abundance of different bacteria/viruses in, on, or around your body. Research into the gut microbiome (the community of bacteria that live in the human digestive tract) has suggested links between these bacteria and many diseases and disorders, including obesity and depression.
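
As a rough illustration of how a signature database could support both uses, the Python sketch below counts how many fragments of each sequencing read match a genome's unique signatures. It is a simplified assumption, not GOTTCHA's actual method: the function name `identify` and the data layout (a dictionary mapping genome names to sets of signature fragments) are hypothetical.

```python
from collections import Counter


def identify(reads, signatures, k=26):
    """Count, per genome, how many read fragments match its unique signatures."""
    # Invert the database: each signature fragment points to its one genome.
    lookup = {frag: name for name, frags in signatures.items() for frag in frags}

    hits = Counter()
    for read in reads:
        # Slide a k-letter window along the read and look up each fragment.
        for i in range(len(read) - k + 1):
            name = lookup.get(read[i:i + k])
            if name is not None:
                hits[name] += 1
    return hits


# Toy example with 4-letter signatures instead of 26.
toy_signatures = {"A": {"ACGT", "CGTA"}, "B": {"TTGT"}}
toy_reads = ["ACGTA", "GGTTGT", "CCCC"]
print(identify(toy_reads, toy_signatures, k=4))
```

A genome with at least one hit is a candidate for being present in the sample, and the relative hit counts give only a crude first indication of abundance. A real tool would also need to account for sequencing errors, genome size, and sequencing depth.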

Because the number of sequenced bacteria keeps growing, keeping the database current will require many more of these efforts. Scholz said the next stage will be to make the database used by GOTTCHA easier to generate by using parallel computing. Then, each time a new bacterium is sequenced, a new version of the database can be generated and shared. Reworking how the database is generated will also reduce the need for extremely large RAM machines in the future.

The generation of the database was facilitated by the purchase of a single-memory, large-RAM machine by MSU’s Institute for Cyber-Enabled Research (ICER).

“We needed it for my shared research with Los Alamos,” Scholz said. “I knew we were about to generate a big database, which takes a lot of memory that few machines can handle. Los Alamos asked if I could run it at ICER, and it worked out.”

This cooperation, Scholz said, created a unique opportunity to generate the second iteration of the database. That success has paved the way for further partnerships, setting up a positive feedback loop.

“This was a good collaboration between ICER and Los Alamos,” Scholz said. “National labs are a great resource for a lot of reasons. This couldn’t have been done easily most places. The availability for specialized hardware is rare.”